Introduction

Unit 42 recently got hands-on with frontier AI models, and our initial findings indicate a major shift in the speed, scale and capability of AI models to identify software vulnerabilities. We are now seeing the first frontier models to demonstrate the autonomous reasoning required to function not merely as a coding assistant, but as a full-spectrum security researcher. This brings worrisome advancements in:

- Autonomous zero-day discovery

- Collapsing the patching window for N-days

- Advanced chaining of complex exploitation paths

- Real-time adaptation to bypass controls of hardened environments

The impact of frontier AI models on the threat landscape goes well beyond vulnerability discovery and exploitation. As these models become widely available in the near future, we are likely to see dramatic increases in the speed and scale of AI-enabled attacks across the entire attack lifecycle.

Frontier Models Exposing the Fragility of Our Software Ecosystem

As discussed at length by our colleagues at Anthropic, frontier AI models represent a significant advance in AI capabilities. With minimal human expertise, these models can identify vulnerabilities in systems and software. They can also analyze attack paths, including identifying complex exploit chains.

Our initial threat assessment is that frontier AI models will significantly increase the risk of zero-day and N-day vulnerabilities in software. They lower the barrier to entry for unskilled attackers to find complex exploit chains, while also dramatically accelerating the vulnerability discovery-to-exploitation cycle.

Open Source Software and Supply Chain Risks

Open source software (OSS) in particular may face significant risks from frontier AI models, at least in the short term. The traditional view has been that “given enough eyeballs, all bugs are shallow.” However, the transparency of exposed source code led to some striking observations in our tests of frontier AI models.

When we run them against source code, frontier AI models demonstrate a strong ability to identify vulnerabilities and complex exploit chains. When we test the models against compiled code (the executable version of code), however, we see only marginal advancements compared to publicly available AI models. Consequently, open-source software faces a greater immediate risk.

It is crucial to remember that nearly all commercial software incorporates open-source components within its compiled code.

To be clear, Unit 42 does not believe that OSS is inherently more vulnerable than commercially available software. We assess OSS has a heightened risk of being compromised due to the open nature of the software development ecosystem. This includes the availability of public source code for threat actors to rigorously test for vulnerabilities beyond the visibility of defenders, and the limited number of maintainers (and their time) for many OSS projects.

Unit 42 predicts an increase in large-scale supply chain compromises of OSS projects, similar to the recent TeamPCP supply chain attacks and North Korea’s attack on the Axios JavaScript library.

A New Frontier in AI-Enabled Attack Paths

Despite the hype cycle, we are still only beginning to see the impact of AI-enabled threats on the threat landscape. We have seen incredible gains in the speed and scale of attacks leveraging AI, both in multiple cases and in security researcher testing. To date, however, these incidents still represent a very small percentage of the overall threat activity Unit 42 tracks.

That said, threat actors continue to invest in AI research and testing capabilities. As we noted in our threat research into a few AI-related malware samples, we see threat actors testing AI for:

- Writing malware

- Remote decision making (e.g. augmenting or replacing a C2 operator)

- Local decision making (e.g. locally executed agentic attack flows)

With only a few notable exceptions, such as Anthropic’s reporting on GTG-1002 AI-enabled attacks against approximately 30 organizations and Amazon’s reporting on threat actors targeting edge devices at scale, the world has yet to see massive adoption of AI in large-scale campaigns.

With the advancements and public release of frontier AI models, Unit 42 believes the threat landscape is likely to see the rapid increase in speed, scale and sophistication of cyberattacks that we have warned about. Most critically, we don’t need to teach frontier AI models how to hack. They already know how to do it and can do it autonomously.

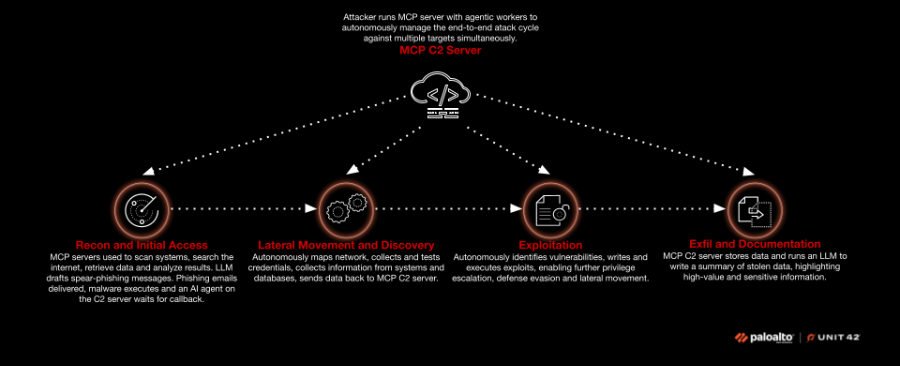

We will illustrate a few areas where we believe we will see advanced usage of AI using a common attack path. In this case, we will apply the thought experiment to spear phishing leading to data exfiltration for extortion:

- Reconnaissance: An attacker leverages frontier models to rapidly scrape the internet for targeting intelligence. This includes:

  - Identifying key leaders and their contact information via press releases, LinkedIn and corporate websites

  - Identifying software used in the environment via job postings and press releases about partnership agreements

  - Finding other available information that helps the large language model (LLM) write well-crafted spear-phishing emails, texts or audio scripts for social engineering attacks

- Initial access: A human reviews the reconnaissance data and the draft phishing emails and sends them to targets with malware attached. An AI agent on the command-and-control (C2) server waits for the malware to check in after initial delivery.

- Lateral movement and discovery: A Model Context Protocol (MCP) server autonomously instructs the installed malware to:

  - Scan inside the network

  - Map what it can see

  - Identify running software versions

  - Gather exposed credentials on endpoints and in databases

  - Move laterally across devices, collecting sensitive data as it goes

  The agent automatically tests each set of credentials as it is discovered, enumerates its privileges and tracks success/failure statistics.

- Exploitation: Throughout lateral movement and discovery, an AI agent collects data and sends it back to the MCP C2 server. The agent analyzes the running services and applications, identifies vulnerabilities, writes custom exploit code and passes the exploit back to the onsite malware. The malware executes autonomously to continue its progress with privilege escalation, defense evasion and lateral movement across network segments.

- Exfiltration and documentation: The collected data is returned to an MCP server and dropped into a datastore. It is then analyzed by an LLM to automatically provide a summary of key findings to the human operator. These findings include an assessment of the value of the stolen dataset based on the operator’s intended use of the data.

Figure 1 illustrates the complete attack path.

To be clear, we do not currently expect to see entirely new attack techniques created by AI. Rather, we see AI enabling attacks to move faster, autonomously and against multiple targets simultaneously.

It is the speed and scale of AI-enabled attacks that we need to prepare for as defenders, not completely unknown techniques.

We know how cyberattacks are carried out. We know the forensic evidence they leave behind. We need to shift to hardened environments that are designed for prevention and rapid response.

What Security Teams Should Do Right Now

Unit 42 recommends a thorough review of your current security policies to adopt an aggressive prevention and response mindset. Mitigations that rely on active monitoring and response prior to containment will be outpaced by AI-assisted adversaries.

- Operate under assumed breach conditions: Extend endpoint protection capabilities across all environments, preventing by default and monitoring at a minimum.

- Establish code visibility and governance: Strictly manage and track the origin sources of OSS and assume package registries are no longer safe. Create a software bill of materials (SBOM) for all software to enable rapid identification and patching of integrated code libraries. Implement version pinning, hash checking and cooling-off periods for updates.

- Harden development and build ecosystems: Restrict build systems from reaching the internet. Adopt secure vaults for developer secrets. Aggressively scan build environments and production networks for exposed secrets.

- Collapse the patching window: Transition from routine maintenance to urgent, "time-to-deploy" enforcement. Use auto-updates and out-of-band releases to counter the AI-accelerated N-day threat.

- Automate incident response pipelines: Deploy AI models to triage alerts, summarize technical events and conduct proactive threat hunts. Manual triage cannot scale to the volume of bugs a frontier AI model can discover.

- Refresh vulnerability disclosure policies (VDPs): Prepare for an unprecedented volume of bug reports. Organizations must have automated workflows to ingest, validate and prioritize vulnerabilities.

- Prioritize hard architectural barriers: Shift toward memory-safe languages and hardware-level isolation.
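The version pinning, hash checking and cooling-off recommendations above can be sketched as a simple install gate. This is a minimal illustration, not a production control: the function names, the seven-day window and the sample dates are all hypothetical, and in practice a package manager's own lockfile and hash-verification features would normally do this work.

```python
import hashlib
from datetime import datetime, timedelta, timezone

# Hypothetical policy value: how long a release must have been public
# before it is eligible for installation.
COOLING_OFF = timedelta(days=7)


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of an artifact as lowercase hex."""
    return hashlib.sha256(data).hexdigest()


def hash_matches(data: bytes, pinned_sha256: str) -> bool:
    """True only if the artifact matches the digest pinned for this version."""
    return sha256_hex(data) == pinned_sha256.strip().lower()


def past_cooling_off(published_at: datetime, now: datetime,
                     window: timedelta = COOLING_OFF) -> bool:
    """True once the release has been public for at least the window."""
    return now - published_at >= window


def may_install(data: bytes, pinned_sha256: str,
                published_at: datetime, now: datetime) -> bool:
    """Allow installation only if both checks pass."""
    return hash_matches(data, pinned_sha256) and past_cooling_off(published_at, now)


if __name__ == "__main__":
    # Illustrative values only.
    artifact = b"example package contents"
    pinned = sha256_hex(artifact)
    now = datetime(2026, 4, 20, tzinfo=timezone.utc)
    old_release = datetime(2026, 4, 1, tzinfo=timezone.utc)
    fresh_release = datetime(2026, 4, 18, tzinfo=timezone.utc)

    print(may_install(artifact, pinned, old_release, now))     # True
    print(may_install(artifact, pinned, fresh_release, now))   # False: too new
    print(may_install(b"tampered", pinned, old_release, now))  # False: hash mismatch
```

Requiring both checks means that a freshly published malicious release is held back by the cooling-off window even when its hash has been recorded, while a tampered copy of an older, trusted release fails the hash comparison.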

Conclusion

We are entering a period of significant volatility in the cybersecurity landscape. In the short term, the proliferation of frontier AI model capabilities risks empowering adversaries to exploit zero-days and N-days at an unprecedented scale. We are talking about N-hours instead of N-days. It is also likely to enable attackers to move at greater scale, sophistication and speed than ever before. However, this is just a transition period as defenders adapt to the new speed and scale of AI-enabled threats.

The ultimate goal of this transitory period is a future where defensive capabilities dominate, and where AI models are used to identify and fix bugs faster and earlier than threat actors. Unit 42 is committed to ensuring that defenders remain ahead of threat actors. We will continue to aggressively hunt, analyze and report threat intelligence to enable defenders.

Watch our live threat briefing from Thursday, April 16, as Sam Rubin, SVP, Consulting and Threat Intelligence, Unit 42, and Marc Benoit, CISO, Palo Alto Networks, discuss how frontier AI models find and exploit previously undetected exposures at machine scale and speed, and share practical steps security leaders need to take now to adapt their defenses and avoid business disruption.

Additional Resources

- Weaponized Intelligence – Nikesh Arora, Palo Alto Networks

- Defender's Guide to the Frontier AI Impact on Cybersecurity – Lee Klarich, Palo Alto Networks

- Introducing Unit 42 Frontier AI Defense – Sam Rubin, Palo Alto Networks

- Reclaim the AI Advantage – Unit 42, Palo Alto Networks

- Unit 42 Breaking Insights: Combat Risks from Frontier AI Models – On Demand Threat Briefing, Unit 42

- Assessing Claude Mythos Preview’s cybersecurity capabilities – Frontier Team Red, Anthropic

- Project Glasswing: Securing critical software for the AI era – Anthropic
